About Me

I am currently a final-year undergraduate student at Beijing Institute of Technology (BIT), and will begin my Ph.D. at The University of Hong Kong (HKU) in Fall 2026. I am also a Visiting Research Student at the Big Data Institute (BDI), The Hong Kong University of Science and Technology (Guangzhou) (HKUST GZ), supervised by Prof. Yongqi Zhang.

My research focuses on Video Understanding, World Models, and Agentic Multimodal AI. I am particularly interested in enabling multimodal agents to imagine future states before answering or acting, by integrating predictive world models into multimodal reasoning and decision-making systems.

I am currently a Research Intern at Tencent Yuanbao (CSIG), working on Multimodal RAG and agentic multimodal reasoning.

If you have internship opportunities related to Video Understanding & Generation, please feel free to reach out to me via email!

Email: enjundu.cs@gmail.com

- [May. 2026] I was named a Beijing Outstanding Graduate and an Outstanding Graduate of Beijing Institute of Technology.

- [Apr. 2026] I was honored to receive the Xu Teli Scholarship, the highest honor at Beijing Institute of Technology (RMB 50,000).

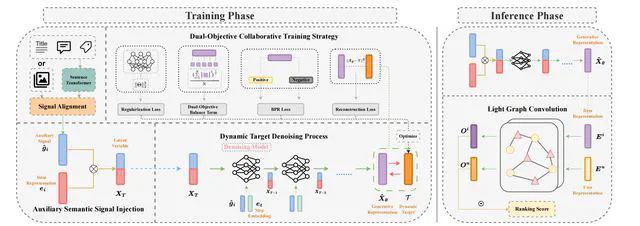

- [Apr. 2026] SemDiff accepted by IEEE Transactions on Knowledge and Data Engineering (CCF-A), thanks to all co-authors!

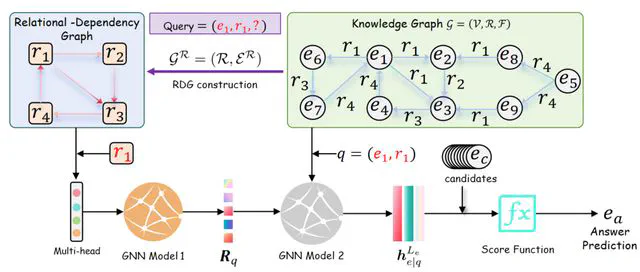

- [Mar. 2026] RED-GNN accepted by Artificial Intelligence Journal (CCF-A), thanks to all co-authors!

- [Mar. 2026] CaDDiSR accepted by ACM Transactions on Intelligent Systems and Technology (TIST), thanks to all co-authors!

- [Mar. 2026] GraphScholar accepted by ICLR 2026 Workshop on AI for Mechanism Design and Strategic Decision Making, thanks to all co-authors!

- [Jan. 2026] I now have a little kitten named Xiao Zhen (meaning “my little treasure”). Welcome home, Du Xiao Zhen!

- [Nov. 2025] GraphOracle accepted at AAAI 2026 as an Oral presentation. See you in Singapore!

- [Sep. 2025--present] I'm now keeping a healthy work-life balance. Please reach out to me only during working hours :)

- [Nov. 2025] I received the Outstanding Student Model Award from Beijing Institute of Technology (the only one in the college)!

- [Oct. 2025] Honored to receive the National Scholarship of China, awarded to the top 0.2% of university students nationwide!

- [Sep. 2025] I received the First-Class Scholarship from Beijing Institute of Technology!

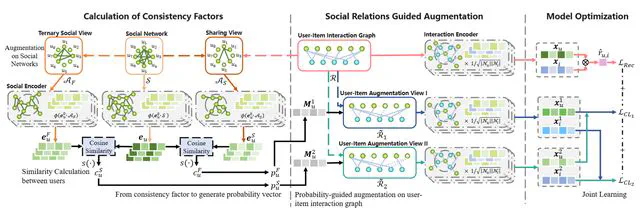

- [Sep. 2025] BHSBR accepted by IEEE Transactions on Big Data, thanks to all co-authors!

- [Sep. 2025] I will join Tencent CSIG in Oct. 2025 for a one-year research internship, based in Tencent Headquarters Tower Shenzhen. Looking forward to doing some interesting work!

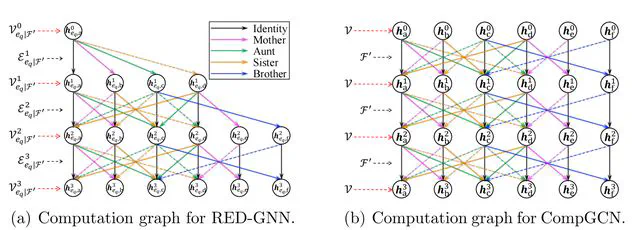

- [Sep. 2025] GraphMaster accepted at NeurIPS 2025 Spotlight. See you in San Diego, USA!

- [Sep. 2025] I am honored to start a relationship with a talented lady. Grateful to fate for bringing us together!

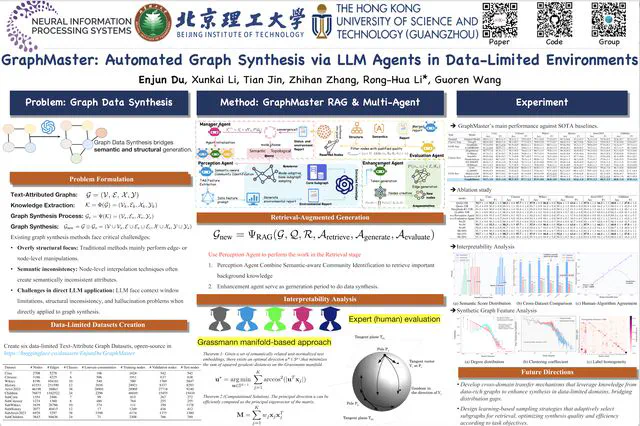

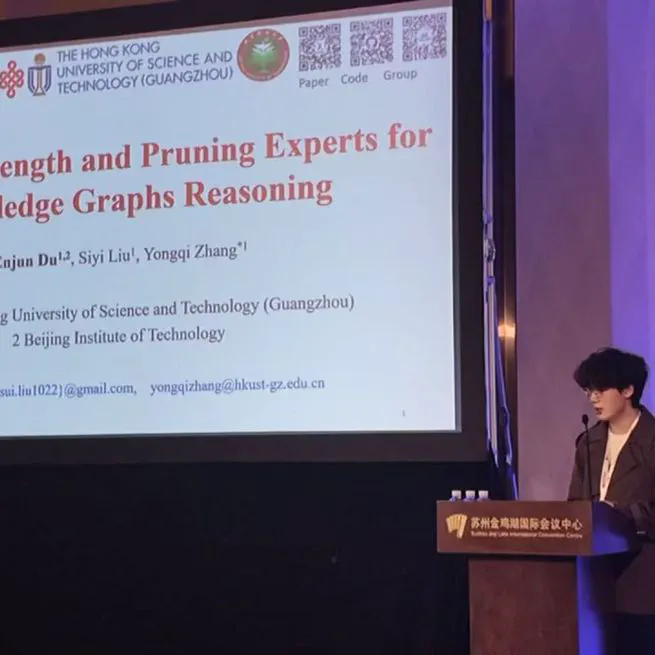

- [Sep. 2025] I will attend EMNLP 2025 in Suzhou and give an oral presentation of our paper Mixture of Length and Pruning Experts for Knowledge Graphs Reasoning (MoKGR). Looking forward to meeting everyone!

- [Aug. 2025] I am actively seeking Ph.D. opportunities for Fall 2026 admission, especially in the areas of Graph for LLM, LLM Reasoning, and Multi-Modal Data-Mining. Please feel free to reach out if you are interested in potential collaboration or supervision!

- [Aug. 2025] MoKGR accepted at EMNLP 2025 main conference, thanks to Prof. Zhang for the guidance. See you in Suzhou!

- [Aug. 2025] I will attend IJCAI 2025 in Guangzhou and give an oral presentation of our paper ADC-GS: Anchor-Driven Deformable and Compressed Gaussian Splatting for Dynamic Scene Reconstruction. Looking forward to meeting everyone!

- [Jun. 2025] Officially joined The Hong Kong University of Science and Technology (Guangzhou) as a Visiting Research Student until July 1, 2026. Looking forward to meeting more HKUST(GZ) peers!

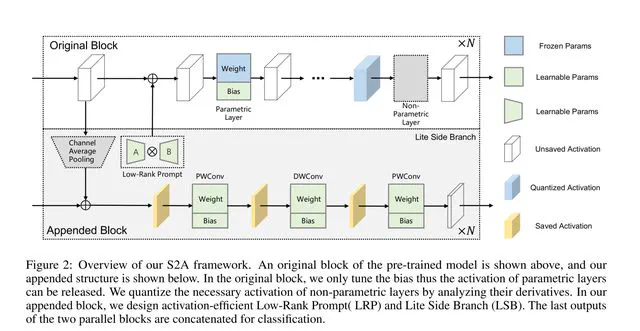

- [Mar. 2025] S2A accepted at CVPR 2025 Workshop, thanks to my co-authors!

- [Feb. 2025] I received the First-Class Scholarship from Beijing Institute of Technology!

- [Nov. 2024] DSVC accepted by IEEE TCSS, thanks to all co-authors!

- [Oct. 2024] I received the Outstanding Student Award from Beijing Institute of Technology!

- [Sep. 2024] I received the Second-Class Scholarship from Beijing Institute of Technology!

- [Feb. 2024] I received the Second-Class Scholarship from Beijing Institute of Technology!

- [Oct. 2023] I received the Outstanding Student Award from Beijing Institute of Technology!

- [Sep. 2023] I received the Outstanding Student Leader Award from Beijing Institute of Technology!

- [Feb. 2023] I received the Second-Class Scholarship from Beijing Institute of Technology!

- Video Understanding

- Video Generation

- Agentic MLLM Reasoning

The Hong Kong University of Science and Technology

Visiting Student

Beijing Institute of Technology

Bachelor of Cyberspace Science and Technology

My research lies at the intersection of Video Understanding, World Models, and Agentic Multimodal AI. Concretely, I study how world models can serve as internal simulators for multimodal agents, enabling them to mentally roll out future visual trajectories, infer hidden dynamics, and evaluate potential actions before committing to a response or decision. My research spans video understanding and reasoning, predictive video generation, world model learning, and agentic multimodal systems. My long-term vision is to make imagination a first-class component of multimodal intelligence, enabling agents that are not only more capable, but also more faithful, grounded, and compute-efficient—reliable enough to be trusted in long-horizon real-world decision making under uncertainty.

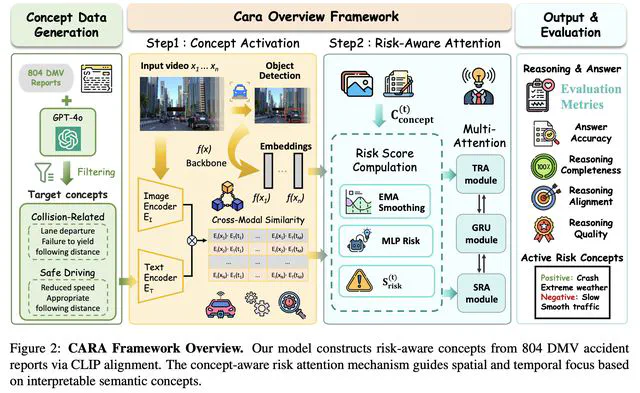

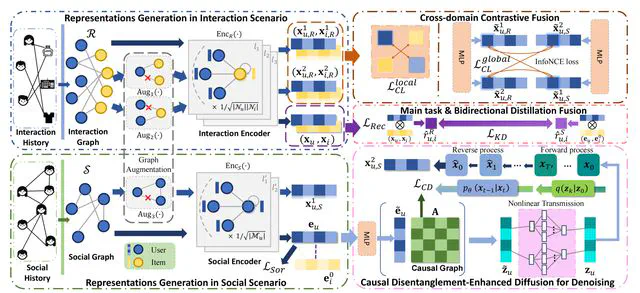

Here are some of my research works.

May 8, 2026

Participated in the final defense for the Xu Teli Scholarship, the highest honorary scholarship at Beijing Institute of Technology (BIT), which carries a prize of RMB 50,000.

Apr 19, 2026

Mar 8, 2026

Feb 8, 2026

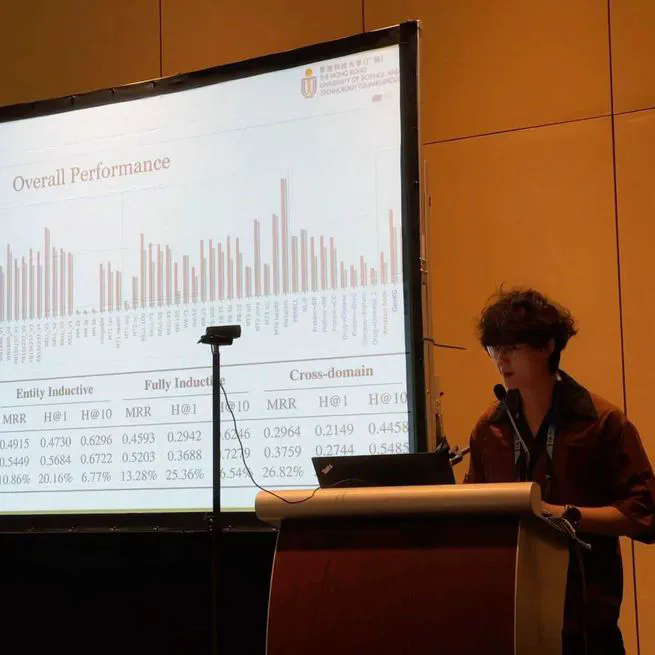

Oral presentation at AAAI 2026 on our paper "GraphOracle: Efficient Fully-Inductive Knowledge Graph Reasoning via Relation-Dependency Graphs".

Jan 25, 2026

Oral presentation at EMNLP 2025 on our paper "Mixture of Length and Pruning Experts for Knowledge Graphs Reasoning".

Nov 5, 2025

📜 Patents

Reply Generation Method, Apparatus, Computer Device, and Storage Medium

Enjun Du, Xinyu Zuo, Lisheng Duan, Yongqi Zhang

Image Retrieval Method, Apparatus, Computer Device, Storage Medium, and Product

Enjun Du, Xinyu Zuo, Lisheng Duan, Ruiwen Tao, Yongqi Zhang

A Knowledge Graph Reasoning Method, Apparatus, Device, and Medium

Yongqi Zhang, Enjun Du, Siyi Liu

A Training Method for Retrieval Models and Related Apparatus

Siyi Liu, Enjun Du, Zirong Chen, Xinyu Zuo, Lisheng Duan, Yongqi Zhang

A Data Processing Method, Apparatus, Electronic Device, and Readable Medium

Zirong Chen, Fuda Ye, Enjun Du, Junfu Pu, Xinyu Zuo, Yongqi Zhang

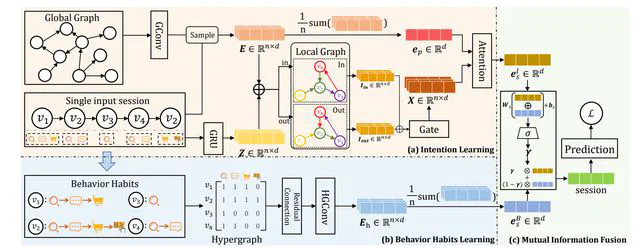

Intent-Aware Session Recommendation Based on Behavior-Enhanced Hypergraph Modeling

Wenhao Xue, Zhida Qin, Haoyao Zhang, Shixiao Yang, Enjun Du, Xingbo Tian, Shuang Li, Tianyu Huang

Chinese National Invention Patent, CN119180288A, published December 2024.

- Journals: ACM TIST, IEEE TCSS

- Conferences: ACL Rolling Review, ICML, NeurIPS, AAAI, ICLR

Experience

Tencent Company

Research Intern

Education

The Hong Kong University of Science and Technology

Visiting Student

Supervised by Prof. Yongqi Zhang

Beijing Institute of Technology

Bachelor of Cyberspace Science and Technology

GPA: 91.7/100, Rank: 1%

Research Assistant in Ronghua Li’s Lab, supervised by Prof. Ronghua Li and Dr. Xunkai Li from November 2024 to August 2025.

Research Intern in Zhida Qin’s Lab, supervised by Prof. Zhida Qin from September 2023 to December 2025.

Xu Teli Scholarship (Highest Honor, RMB 50,000)

Beijing Outstanding Graduate & Outstanding Graduate of Beijing Institute of Technology

China’s National Scholarship

I genuinely believe that meaningful progress in academia stems from open dialogue and thoughtful debate. If you have any questions about my research, or if you’ve previously contacted me through GitHub issues and haven’t received a response, please feel free to reach out via email. I’m always happy to chat, collaborate, or offer assistance where I can.

Throughout my academic journey, I’ve been fortunate to receive support and inspiration from many generous people. I’m always eager to give back to the community and engage with others passionate about learning and discovery.

Preferred Email:

Optional Email:

Please avoid contacting me at enjun_du@bit.edu.cn, as I will no longer have access to this address.